![Biopython Logo [Biopython Logo]](images/biopython.jpg)


Biopython Tutorial and Cookbook

Jeff Chang, Brad Chapman, Iddo Friedberg, Thomas Hamelryck,

The Biopython Project is an international association of developers of freely available Python (http://www.python.org) tools for computational molecular biology. Python is an object oriented, interpreted, flexible language that is becoming increasingly popular for scientific computing. Python is easy to learn, has a very clear syntax and can easily be extended with modules written in C, C++ or FORTRAN.

The Biopython web site (http://www.biopython.org) provides an online resource for modules, scripts, and web links for developers of Python-based software for bioinformatics use and research. Basically, the goal of Biopython is to make it as easy as possible to use Python for bioinformatics by creating high-quality, reusable modules and classes. Biopython features include parsers for various Bioinformatics file formats (BLAST, Clustalw, FASTA, Genbank,...), access to online services (NCBI, Expasy,...), interfaces to common and not-so-common programs (Clustalw, DSSP, MSMS...), a standard sequence class, various clustering modules, a KD tree data structure etc. and even documentation.

Basically, we just like to program in Python and want to make it as easy as possible to use Python for bioinformatics by creating high-quality, reusable modules and scripts.

The main Biopython releases have lots of functionality, including:

We hope this gives you plenty of reasons to download and start using Biopython!

All of the installation information for Biopython was separated from this document to make it easier to keep updated.

The short version is go to our downloads page (http://biopython.org/wiki/Download), download and install the listed dependencies, then download and install Biopython. Biopython runs on many platforms (Windows, Mac, and on the various flavors of Linux and Unix). For Windows we provide pre-compiled click-and-run installers, while for Unix and other operating systems you must install from source as described in the included README file. This is usually as simple as the standard commands:

python setup.py build

python setup.py test

sudo python setup.py install

(You can in fact skip the build and test, and go straight to the install, but it’s better to make sure everything seems to be working.)

The longer version of our installation instructions covers installation of Python, Biopython dependencies and Biopython itself. It is available in PDF (http://biopython.org/DIST/docs/install/Installation.pdf) and HTML formats (http://biopython.org/DIST/docs/install/Installation.html).

Bio.PDB: [18, Hamelryck and Manderick, 2003];

Bio.Cluster: [14, De Hoon et al., 2004];

Bio.Graphics.GenomeDiagram: [2, Pritchard et al., 2006];

Bio.Phylo and Bio.Phylo.PAML: [9, Talevich et al., 2012];

For example, this will only work under Python 2:

>>> print "Hello World!"

Hello World!

If you try that on Python 3 you’ll get a SyntaxError.

Under Python 3 you must write:

>>> print("Hello World!")

Hello World!

Surprisingly that will also work on Python 2 – but only for simple examples printing one thing. In general you need to add this magic line to the start of your Python scripts to use the print function under Python 2.6 and 2.7:

from __future__ import print_function

If you forget to add this magic import, under Python 2 you’ll see extra brackets produced by trying to use the print function when Python 2 is interpreting it as a print statement and a tuple.

>>> import Bio

>>> print(Bio.__version__)

...

If the “import Bio” line fails, Biopython is not installed. If the second line fails, your version is very out of date. If the version string ends with a plus, you don’t have an official release, but a snapshot of the in development code.

Seq object missing the upper & lower methods described in this Tutorial?

str(my_seq).upper() to get an upper case string.

If you need a Seq object, try Seq(str(my_seq).upper()) but be careful about blindly re-using the same alphabet.

Seq object translation method support the cds option described in this Tutorial?

Bio.SeqIO and Bio.AlignIO read and write?

Bio.SeqIO and Bio.AlignIO functions parse, read and write take filenames? They insist on handles!

Bio.SeqIO.write() and Bio.AlignIO.write() functions accept a single record or alignment? They insist on a list or iterator!

[...] to create a list of one element.

str(...) give me the full sequence of a Seq object?

Bio.Blast work with the latest plain text NCBI blast output?

Bio.Entrez.parse() work? The module imports fine but there is no parse function!

Bio.Entrez.efetch() stopped working?

retmode="text" to your call. Second, they are now stricter about how to provide a list of IDs; Biopython 1.59 onwards turns a list into a comma separated string automatically.

Bio.Blast.NCBIWWW.qblast() give the same results as the NCBI BLAST website?

Bio.Blast.NCBIXML.read() work? The module imports but there is no read function!

SeqRecord object have a letter_annotations attribute?

SeqRecord to get a sub-record?

SeqRecord objects together?

Bio.SeqIO.convert() or Bio.AlignIO.convert() work? The modules import fine but there is no convert function!

parse and write functions as described in this tutorial (see Sections 5.5.2 and 6.2.1).

Bio.SeqIO.index() work? The module imports fine but there is no index function!

Bio.SeqIO.index_db() work? The module imports fine but there is no index_db function!

MultipleSeqAlignment object? The Bio.Align module imports fine but this class isn’t there!

Bio.Align.Generic.Alignment class supports some of its functionality, but using this is now discouraged.

subprocess module directly.

__init__.py files. If you are not used to looking for code in this file this can be confusing. The reason we do this is to make the imports easier for users. For instance, instead of having to do a “repetitive” import like from Bio.GenBank import GenBank, you can just use from Bio import GenBank.

For more general questions, the Python FAQ pages http://www.python.org/doc/faq/ may be useful.
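The version check described at the start of these questions can also be written as a short script. This is only a sketch: it relies on nothing beyond the Bio.__version__ attribute mentioned above, and the function name biopython_version is made up for illustration.

```python
def biopython_version():
    """Return the installed Biopython version string, or None if it is missing."""
    try:
        import Bio
    except ImportError:
        # The "import Bio" line failed: Biopython is not installed.
        return None
    # Very old releases have no __version__ attribute at all.
    return getattr(Bio, "__version__", "unknown (very old release)")


version = biopython_version()
if version is None:
    print("Biopython is not installed")
elif version.endswith("+"):
    # A trailing plus marks a snapshot of the in development code.
    print("Development snapshot: %s" % version)
else:
    print("Biopython version: %s" % version)
```

The script runs whether or not Biopython is present, which makes it convenient to include in a bug report.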

This section is designed to get you started quickly with Biopython, and to give a general overview of what is available and how to use it. All of the examples in this section assume that you have some general working knowledge of Python, and that you have successfully installed Biopython on your system. If you think you need to brush up on your Python, the main Python web site provides quite a bit of free documentation to get started with (http://www.python.org/doc/).

Since much biological work on the computer involves connecting with databases on the internet, some of the examples will also require a working internet connection in order to run.

Now that that is all out of the way, let’s get into what we can do with Biopython.

As mentioned in the introduction, Biopython is a set of libraries to provide the ability to deal with “things” of interest to biologists working on the computer. In general this means that you will need to have at least some programming experience (in Python, of course!) or at least an interest in learning to program. Biopython’s job is to make your job easier as a programmer by supplying reusable libraries so that you can focus on answering your specific question of interest, instead of focusing on the internals of parsing a particular file format (of course, if you want to help by writing a parser that doesn’t exist and contributing it to Biopython, please go ahead!). So Biopython’s job is to make you happy!

One thing to note about Biopython is that it often provides multiple ways of “doing the same thing.” Things have improved in recent releases, but this can still be frustrating as in Python there should ideally be one right way to do something. However, this can also be a real benefit because it gives you lots of flexibility and control over the libraries. The tutorial helps to show you the common or easy ways to do things so that you can just make things work. To learn more about the alternative possibilities, look in the Cookbook (Chapter 18, this has some cool tricks and tips), the Advanced section (Chapter 20), the built in “docstrings” (via the Python help command, or the API documentation) or ultimately the code itself.

Disputably (of course!), the central object in bioinformatics is the sequence. Thus, we’ll start with a quick introduction to the Biopython mechanisms for dealing with sequences, the Seq object, which we’ll discuss in more detail in Chapter 3.

Most of the time when we think about sequences we have in mind a string of letters like ‘AGTACACTGGT’. You can create a Seq object with this sequence as follows (the “>>>” represents the Python prompt, followed by what you would type in):

>>> from Bio.Seq import Seq

>>> my_seq = Seq("AGTACACTGGT")

>>> my_seq

Seq('AGTACACTGGT', Alphabet())

>>> print(my_seq)

AGTACACTGGT

>>> my_seq.alphabet

Alphabet()

What we have here is a sequence object with a generic alphabet - reflecting the fact we have not specified if this is a DNA or protein sequence (okay, a protein with a lot of Alanines, Glycines, Cysteines and Threonines!). We’ll talk more about alphabets in Chapter 3.

In addition to having an alphabet, the Seq object differs from the Python string in the methods it supports. You can’t do this with a plain string:

>>> my_seq

Seq('AGTACACTGGT', Alphabet())

>>> my_seq.complement()

Seq('TCATGTGACCA', Alphabet())

>>> my_seq.reverse_complement()

Seq('ACCAGTGTACT', Alphabet())

The next most important class is the SeqRecord or Sequence Record. This holds a sequence (as a Seq object) with additional annotation including an identifier, name and description. The Bio.SeqIO module for reading and writing sequence file formats works with SeqRecord objects, which will be introduced below and covered in more detail by Chapter 5.

This covers the basic features and uses of the Biopython sequence class. Now that you’ve got some idea of what it is like to interact with the Biopython libraries, it’s time to delve into the fun, fun world of dealing with biological file formats!

Before we jump right into parsers and everything else to do with Biopython, let’s set up an example to motivate everything we do and make life more interesting. After all, if there wasn’t any biology in this tutorial, why would you want to read it?

Since I love plants, I think we’re just going to have to have a plant based example (sorry to all the fans of other organisms out there!). Having just completed a recent trip to our local greenhouse, we’ve suddenly developed an incredible obsession with Lady Slipper Orchids (if you wonder why, have a look at some Lady Slipper Orchids photos on Flickr, or try a Google Image Search).

Of course, orchids are not only beautiful to look at, they are also extremely interesting for people studying evolution and systematics. So let’s suppose we’re thinking about writing a funding proposal to do a molecular study of Lady Slipper evolution, and would like to see what kind of research has already been done and how we can add to that.

After a little bit of reading up we discover that the Lady Slipper Orchids are in the Orchidaceae family and the Cypripedioideae sub-family and are made up of 5 genera: Cypripedium, Paphiopedilum, Phragmipedium, Selenipedium and Mexipedium.

That gives us enough to get started delving for more information. So, let’s look at how the Biopython tools can help us. We’ll start with sequence parsing in Section 2.4, but the orchids will be back later on as well - for example we’ll search PubMed for papers about orchids and extract sequence data from GenBank in Chapter 9, extract data from Swiss-Prot from certain orchid proteins in Chapter 10, and work with ClustalW multiple sequence alignments of orchid proteins in Section 6.4.1.

A large part of much bioinformatics work involves dealing with the many types of file formats designed to hold biological data. These files are loaded with interesting biological data, and a special challenge is parsing these files into a format so that you can manipulate them with some kind of programming language. However the task of parsing these files can be frustrated by the fact that the formats can change quite regularly, and that formats may contain small subtleties which can break even the most well designed parsers.

We are now going to briefly introduce the Bio.SeqIO module – you can find out more in Chapter 5. We’ll start with an online search for our friends, the lady slipper orchids. To keep this introduction simple, we’re just using the NCBI website by hand. Let’s just take a look through the nucleotide databases at NCBI, using an Entrez online search (http://www.ncbi.nlm.nih.gov:80/entrez/query.fcgi?db=Nucleotide) for everything mentioning the text Cypripedioideae (this is the subfamily of lady slipper orchids).

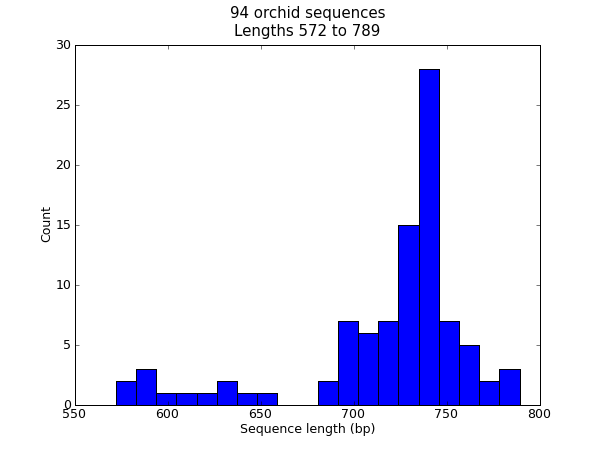

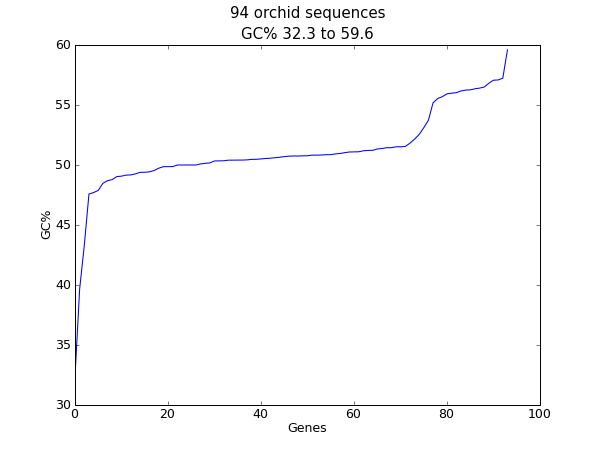

When this tutorial was originally written, this search gave us only 94 hits, which we saved as a FASTA formatted text file and as a GenBank formatted text file (files ls_orchid.fasta and ls_orchid.gbk, also included with the Biopython source code under docs/tutorial/examples/).

If you run the search today, you’ll get hundreds of results! When following the tutorial, if you want to see the same list of genes, just download the two files above or copy them from docs/examples/ in the Biopython source code. In Section 2.5 we will look at how to do a search like this from within Python.

If you open the lady slipper orchids FASTA file ls_orchid.fasta in your favourite text editor, you’ll see that the file starts like this:

>gi|2765658|emb|Z78533.1|CIZ78533 C.irapeanum 5.8S rRNA gene and ITS1 and ITS2 DNA

CGTAACAAGGTTTCCGTAGGTGAACCTGCGGAAGGATCATTGATGAGACCGTGGAATAAACGATCGAGTG

AATCCGGAGGACCGGTGTACTCAGCTCACCGGGGGCATTGCTCCCGTGGTGACCCTGATTTGTTGTTGGG

...

It contains 94 records, each has a line starting with “>” (greater-than symbol) followed by the sequence on one or more lines. Now try this in Python:

from Bio import SeqIO

for seq_record in SeqIO.parse("ls_orchid.fasta", "fasta"):

    print(seq_record.id)

    print(repr(seq_record.seq))

    print(len(seq_record))

You should get something like this on your screen:

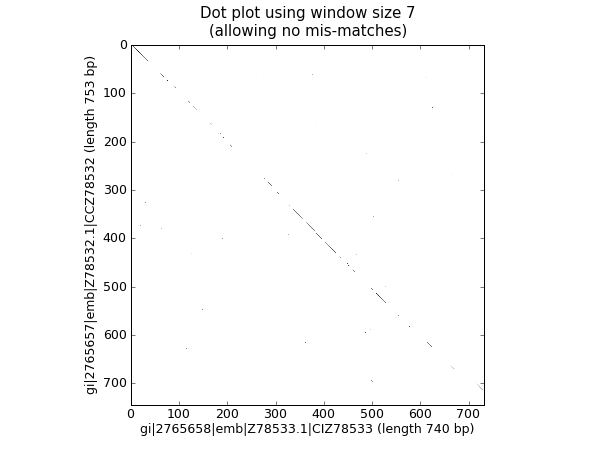

gi|2765658|emb|Z78533.1|CIZ78533

Seq('CGTAACAAGGTTTCCGTAGGTGAACCTGCGGAAGGATCATTGATGAGACCGTGG...CGC', SingleLetterAlphabet())

740

...

gi|2765564|emb|Z78439.1|PBZ78439

Seq('CATTGTTGAGATCACATAATAATTGATCGAGTTAATCTGGAGGATCTGTTTACT...GCC', SingleLetterAlphabet())

592

Notice that the FASTA format does not specify the alphabet, so Bio.SeqIO has defaulted to the rather generic SingleLetterAlphabet() rather than something DNA specific.
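To make the record structure concrete, here is a minimal plain-Python sketch of reading FASTA text. It is not how Bio.SeqIO is implemented, and it ignores many things a real parser has to handle; the function name read_fasta is hypothetical, and the sample text is just the header and first two sequence lines of the record shown above.

```python
import io


def read_fasta(handle):
    """Yield (identifier, sequence) pairs from FASTA-formatted text."""
    identifier, chunks = None, []
    for line in handle:
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            # A new header line closes the previous record, if there was one.
            if identifier is not None:
                yield identifier, "".join(chunks)
            # The identifier is the first word after the ">" symbol.
            words = line[1:].split(None, 1)
            identifier, chunks = (words[0] if words else ""), []
        elif identifier is not None:
            # Sequence data may be spread over one or more lines.
            chunks.append(line)
    if identifier is not None:
        yield identifier, "".join(chunks)


example = io.StringIO(
    ">gi|2765658|emb|Z78533.1|CIZ78533 C.irapeanum 5.8S rRNA gene and ITS1 and ITS2 DNA\n"
    "CGTAACAAGGTTTCCGTAGGTGAACCTGCGGAAGGATCATTGATGAGACCGTGGAATAAACGATCGAGTG\n"
    "AATCCGGAGGACCGGTGTACTCAGCTCACCGGGGGCATTGCTCCCGTGGTGACCCTGATTTGTTGTTGGG\n"
)
records = list(read_fasta(example))
for identifier, sequence in records:
    print(identifier)
    print(len(sequence))
```

Note that nothing in the text says whether the letters are DNA or protein, which is why a parser has to fall back on a generic alphabet.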

Now let’s load the GenBank file ls_orchid.gbk instead - notice that the code to do this is almost identical to the snippet used above for the FASTA file - the only difference is we change the filename and the format string:

from Bio import SeqIO

for seq_record in SeqIO.parse("ls_orchid.gbk", "genbank"):

    print(seq_record.id)

    print(repr(seq_record.seq))

    print(len(seq_record))

This should give:

Z78533.1

Seq('CGTAACAAGGTTTCCGTAGGTGAACCTGCGGAAGGATCATTGATGAGACCGTGG...CGC', IUPACAmbiguousDNA())

740

...

Z78439.1

Seq('CATTGTTGAGATCACATAATAATTGATCGAGTTAATCTGGAGGATCTGTTTACT...GCC', IUPACAmbiguousDNA())

592

This time Bio.SeqIO has been able to choose a sensible alphabet, IUPAC Ambiguous DNA. You’ll also notice that a shorter string has been used as the seq_record.id in this case.
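The shorter GenBank identifier is also embedded in the longer FASTA one. As a small illustration, assuming the pipe-separated layout of the identifiers printed above (an observation about these particular records, not a general rule), it can be picked out with plain string handling:

```python
fasta_id = "gi|2765658|emb|Z78533.1|CIZ78533"

# Fields are separated by "|"; in this layout the fourth field is the
# versioned accession that the GenBank parser reported as the record id.
fields = fasta_id.split("|")
accession = fields[3]
print(accession)
```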

Biopython has a lot of parsers, and each has its own little special niches based on the sequence format it is parsing and all of that. Chapter 5 covers Bio.SeqIO in more detail, while Chapter 6 introduces Bio.AlignIO for sequence alignments.

While the most popular file formats have parsers integrated into Bio.SeqIO and/or Bio.AlignIO, for some of the rarer and unloved file formats there is either no parser at all, or an old parser which has not been linked in yet.

Please also check the wiki pages http://biopython.org/wiki/SeqIO and http://biopython.org/wiki/AlignIO for the latest information, or ask on the mailing list. The wiki pages should include an up to date list of supported file types, and some additional examples.

The next place to look for information about specific parsers and how to do cool things with them is in the Cookbook (Chapter 18 of this Tutorial). If you don’t find the information you are looking for, please consider helping out your poor overworked documentors and submitting a cookbook entry about it! (once you figure out how to do it, that is!)

One of the very common things that you need to do in bioinformatics is extract information from biological databases. It can be quite tedious to access these databases manually, especially if you have a lot of repetitive work to do. Biopython attempts to save you time and energy by making some on-line databases available from Python scripts. Currently, Biopython has code to extract information from the following databases:

Bio.SCOP.search() function.

The code in these modules basically makes it easy to write Python code that interacts with the CGI scripts on these pages, so that you can get results in an easy to deal with format. In some cases, the results can be tightly integrated with the Biopython parsers to make it even easier to extract information.

Now that you’ve made it this far, you hopefully have a good understanding of the basics of Biopython and are ready to start using it for doing useful work. The best thing to do now is finish reading this tutorial, and then if you want start snooping around in the source code, and looking at the automatically generated documentation.

Once you get a picture of what you want to do, and what libraries in Biopython will do it, you should take a peek at the Cookbook (Chapter 18), which may have example code to do something similar to what you want to do.

If you know what you want to do, but can’t figure out how to do it, please feel free to post questions to the main Biopython list (see http://biopython.org/wiki/Mailing_lists). This will not only help us answer your question, it will also allow us to improve the documentation so it can help the next person do what you want to do.

Enjoy the code!

Biological sequences are arguably the central object in Bioinformatics, and in this chapter we’ll introduce the Biopython mechanism for dealing with sequences, the Seq object.

Chapter 4 will introduce the related SeqRecord object, which combines the sequence information with any annotation, used again in Chapter 5 for Sequence Input/Output.

Sequences are essentially strings of letters like AGTACACTGGT, which seems very natural since this is the most common way that sequences are seen in biological file formats.

There are two important differences between Seq objects and standard Python strings.

First of all, they have different methods. Although the Seq object supports many of the same methods as a plain string, its translate() method differs by doing biological translation, and there are also additional biologically relevant methods like reverse_complement().

Secondly, the Seq object has an important attribute, alphabet, which is an object describing what the individual characters making up the sequence string “mean”, and how they should be interpreted. For example, is AGTACACTGGT a DNA sequence, or just a protein sequence that happens to be rich in Alanines, Glycines, Cysteines and Threonines?

The alphabet object is perhaps the important thing that makes the Seq object more than just a string. The currently available alphabets for Biopython are defined in the Bio.Alphabet module. We’ll use the IUPAC alphabets (http://www.chem.qmw.ac.uk/iupac/) here to deal with some of our favorite objects: DNA, RNA and Proteins.

Bio.Alphabet.IUPAC provides basic definitions for proteins, DNA and RNA, but additionally provides the ability to extend and customize the basic definitions. For instance, for proteins, there is a basic IUPACProtein class, but there is an additional ExtendedIUPACProtein class providing for the additional elements “U” (or “Sec” for selenocysteine) and “O” (or “Pyl” for pyrrolysine), plus the ambiguous symbols “B” (or “Asx” for asparagine or aspartic acid), “Z” (or “Glx” for glutamine or glutamic acid), “J” (or “Xle” for leucine or isoleucine) and “X” (or “Xxx” for an unknown amino acid). For DNA you’ve got choices of IUPACUnambiguousDNA, which provides for just the basic letters, IUPACAmbiguousDNA (which provides for ambiguity letters for every possible situation) and ExtendedIUPACDNA, which allows letters for modified bases. Similarly, RNA can be represented by IUPACAmbiguousRNA or IUPACUnambiguousRNA.

The advantages of having an alphabet class are two fold. First, this gives an idea of the type of information the Seq object contains. Secondly, this provides a means of constraining the information, as a means of type checking.
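The type checking idea can be pictured without Biopython at all. The sketch below uses a hypothetical helper, letters_are_valid, and a hand-written string of the four unambiguous DNA letters; it is not the Bio.Alphabet API, only an illustration of what constraining a sequence to an alphabet means.

```python
# Assumption for illustration: the four basic DNA letters, upper case only.
UNAMBIGUOUS_DNA_LETTERS = "GATC"


def letters_are_valid(sequence, allowed_letters):
    """Return True if every letter of sequence is in allowed_letters."""
    return set(sequence) <= set(allowed_letters)


dna_ok = letters_are_valid("AGTACACTGGT", UNAMBIGUOUS_DNA_LETTERS)
protein_ok = letters_are_valid("EVRNAK", UNAMBIGUOUS_DNA_LETTERS)
print(dna_ok)
print(protein_ok)
```

The first sequence passes because it uses only G, A, T and C; the second fails because letters such as E and V are not DNA letters.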

Now that we know what we are dealing with, let’s look at how to utilize this class to do interesting work. You can create an ambiguous sequence with the default generic alphabet like this:

>>> from Bio.Seq import Seq

>>> my_seq = Seq("AGTACACTGGT")

>>> my_seq

Seq('AGTACACTGGT', Alphabet())

>>> my_seq.alphabet

Alphabet()

However, where possible you should specify the alphabet explicitly when creating your sequence objects - in this case an unambiguous DNA alphabet object:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import IUPAC

>>> my_seq = Seq("AGTACACTGGT", IUPAC.unambiguous_dna)

>>> my_seq

Seq('AGTACACTGGT', IUPACUnambiguousDNA())

>>> my_seq.alphabet

IUPACUnambiguousDNA()

Unless of course, this really is an amino acid sequence:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import IUPAC

>>> my_prot = Seq("AGTACACTGGT", IUPAC.protein)

>>> my_prot

Seq('AGTACACTGGT', IUPACProtein())

>>> my_prot.alphabet

IUPACProtein()

In many ways, we can deal with Seq objects as if they were normal Python strings, for example getting the length, or iterating over the elements:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import IUPAC

>>> my_seq = Seq("GATCG", IUPAC.unambiguous_dna)

>>> for index, letter in enumerate(my_seq):

...     print("%i %s" % (index, letter))

0 G

1 A

2 T

3 C

4 G

>>> print(len(my_seq))

5

You can access elements of the sequence in the same way as for strings (but remember, Python counts from zero!):

>>> print(my_seq[0]) #first letter

G

>>> print(my_seq[2]) #third letter

T

>>> print(my_seq[-1]) #last letter

G

The Seq object has a .count() method, just like a string. Note that this means that like a Python string, this gives a non-overlapping count:

>>> from Bio.Seq import Seq

>>> "AAAA".count("AA")

2

>>> Seq("AAAA").count("AA")

2
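The count is 2 because, after each match, the search resumes after the end of that match. If overlapping matches should be counted instead, one plain-Python way is to restart the search one letter further along each time. This is a sketch using a hypothetical helper name, count_overlapping, shown with ordinary strings rather than Seq objects.

```python
def count_overlapping(sequence, sub):
    """Count occurrences of sub in sequence, allowing overlaps."""
    if not sub:
        raise ValueError("sub must not be empty")
    count = 0
    start = sequence.find(sub)
    while start != -1:
        count += 1
        # Move on by one letter only, so overlapping matches are found.
        start = sequence.find(sub, start + 1)
    return count


print("AAAA".count("AA"))
print(count_overlapping("AAAA", "AA"))
```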

For some biological uses, you may actually want an overlapping count (i.e. 3 in this trivial example). When searching for single letters, this makes no difference:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import IUPAC

>>> my_seq = Seq('GATCGATGGGCCTATATAGGATCGAAAATCGC', IUPAC.unambiguous_dna)

>>> len(my_seq)

32

>>> my_seq.count("G")

9

>>> 100 * float(my_seq.count("G") + my_seq.count("C")) / len(my_seq)

46.875

While you could use the above snippet of code to calculate a GC%, note that the Bio.SeqUtils module has several GC functions already built. For example:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import IUPAC

>>> from Bio.SeqUtils import GC

>>> my_seq = Seq('GATCGATGGGCCTATATAGGATCGAAAATCGC', IUPAC.unambiguous_dna)

>>> GC(my_seq)

46.875

Note that using the Bio.SeqUtils.GC() function should automatically cope with mixed case sequences and the ambiguous nucleotide S which means G or C.
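For comparison, here is a plain-Python sketch of a GC percentage that copes with mixed case and counts the ambiguity letter S. The name gc_percent is hypothetical and this is not the Bio.SeqUtils code; in particular, returning 0.0 for an empty sequence is an assumption made here to avoid a division by zero.

```python
def gc_percent(sequence):
    """Percentage of letters that are G, C or S, ignoring case."""
    upper = str(sequence).upper()
    if not upper:
        return 0.0
    gc = sum(upper.count(letter) for letter in "GCS")
    return 100.0 * gc / len(upper)


print(gc_percent("GATCGATGGGCCTATATAGGATCGAAAATCGC"))
print(gc_percent("gatcgatgggcctatataggatcgaaaatcgc"))
```

Both calls give the same answer as the manual calculation earlier, since the only difference between the inputs is case.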

Also note that just like a normal Python string, the Seq object is in some ways “read-only”. If you need to edit your sequence, for example simulating a point mutation, look at Section 3.12 below which talks about the MutableSeq object.

A more complicated example, let’s get a slice of the sequence:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import IUPAC

>>> my_seq = Seq("GATCGATGGGCCTATATAGGATCGAAAATCGC", IUPAC.unambiguous_dna)

>>> my_seq[4:12]

Seq('GATGGGCC', IUPACUnambiguousDNA())

Two things are interesting to note. First, this follows the normal conventions for Python strings. So the first element of the sequence is 0 (which is normal for computer science, but not so normal for biology). When you do a slice the first item is included (i.e. 4 in this case) and the last is excluded (12 in this case), which is the way things work in Python, but of course not necessarily the way everyone in the world would expect. The main goal is to stay consistent with what Python does.

The second thing to notice is that the slice is performed on the sequence data string, but the new object produced is another Seq object which retains the alphabet information from the original Seq object.
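Because biologists usually number positions from one and include both end points, it can help to see the conversion written out. The helper below, slice_one_based, is a hypothetical name used only for this sketch, and it works on plain strings so that it runs without Biopython.

```python
def slice_one_based(sequence, first, last):
    """Return positions first..last (1-based, both included) of sequence."""
    if first < 1 or last < first:
        raise ValueError("expected 1 <= first <= last")
    # Shift the start down by one; the end stays the same because Python
    # excludes the stop index of a slice.
    return sequence[first - 1:last]


my_seq = "GATCGATGGGCCTATATAGGATCGAAAATCGC"
print(slice_one_based(my_seq, 5, 12))
print(my_seq[4:12])
```

Both lines print the same eight letters: positions 5 to 12 in one-based counting are the slice 4:12 in Python.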

Also like a Python string, you can do slices with a start, stop and stride (the step size, which defaults to one). For example, we can get the first, second and third codon positions of this DNA sequence:

>>> my_seq[0::3]

Seq('GCTGTAGTAAG', IUPACUnambiguousDNA())

>>> my_seq[1::3]

Seq('AGGCATGCATC', IUPACUnambiguousDNA())

>>> my_seq[2::3]

Seq('TAGCTAAGAC', IUPACUnambiguousDNA())
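To see how the three strides relate to codons, the following sketch zips them back together. It uses a plain string so that it runs without Biopython; the variable names are chosen only for this illustration.

```python
my_seq = "GATCGATGGGCCTATATAGGATCGAAAATCGC"

first, second, third = my_seq[0::3], my_seq[1::3], my_seq[2::3]

# zip stops at the shortest input, so the two letters left over at the
# end of this 32 letter sequence are dropped.
codons = ["".join(letters) for letters in zip(first, second, third)]
print(codons)
```

This gives ten complete codons; the trailing GC does not form a full codon and is left out.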

Another stride trick you might have seen with a Python string is the use of a -1 stride to reverse the string. You can do this with a Seq object too:

>>> my_seq[::-1]

Seq('CGCTAAAAGCTAGGATATATCCGGGTAGCTAG', IUPACUnambiguousDNA())

If you really do just need a plain string, for example to write to a file, or insert into a database, then this is very easy to get:

>>> str(my_seq)

'GATCGATGGGCCTATATAGGATCGAAAATCGC'

Since calling str() on a Seq object returns the full sequence as a string, you often don’t actually have to do this conversion explicitly. Python does this automatically in the print function (and the print statement under Python 2):

>>> print(my_seq)

GATCGATGGGCCTATATAGGATCGAAAATCGC

You can also use the Seq object directly with a %s placeholder when using the Python string formatting or interpolation operator (%):

>>> fasta_format_string = ">Name\n%s\n" % my_seq

>>> print(fasta_format_string)

>Name

GATCGATGGGCCTATATAGGATCGAAAATCGC

<BLANKLINE>

This line of code constructs a simple FASTA format record (without worrying about line wrapping).

Section 4.5 describes a neat way to get a FASTA formatted string from a SeqRecord object, while the more general topic of reading and writing FASTA format sequence files is covered in Chapter 5.
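If line wrapping does matter, the sequence can be cut into fixed-width pieces by hand. This is a sketch with a hypothetical function name, fasta_string; the default width of 60 letters is an assumption (a common choice, not something the format requires), and a width of 10 is used in the example only to make the wrapping visible.

```python
def fasta_string(name, sequence, width=60):
    """Return a FASTA record as a string, wrapping the sequence lines."""
    text = str(sequence)
    lines = [">%s" % name]
    # Take the sequence in consecutive pieces of at most `width` letters.
    for start in range(0, len(text), width):
        lines.append(text[start:start + width])
    return "\n".join(lines) + "\n"


wrapped = fasta_string("Name", "GATCGATGGGCCTATATAGGATCGAAAATCGC", width=10)
print(wrapped)
```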


Naturally, you can in principle add any two Seq objects together - just like you can with Python strings to concatenate them. However, you can’t add sequences with incompatible alphabets, such as a protein sequence and a DNA sequence:

>>> from Bio.Alphabet import IUPAC

>>> from Bio.Seq import Seq

>>> protein_seq = Seq("EVRNAK", IUPAC.protein)

>>> dna_seq = Seq("ACGT", IUPAC.unambiguous_dna)

>>> protein_seq + dna_seq

Traceback (most recent call last):

...

TypeError: Incompatible alphabets IUPACProtein() and IUPACUnambiguousDNA()

If you really wanted to do this, you’d have to first give both sequences generic alphabets:

>>> from Bio.Alphabet import generic_alphabet

>>> protein_seq.alphabet = generic_alphabet

>>> dna_seq.alphabet = generic_alphabet

>>> protein_seq + dna_seq

Seq('EVRNAKACGT', Alphabet())

Here is an example of adding a generic nucleotide sequence to an unambiguous IUPAC DNA sequence, resulting in a generic nucleotide sequence:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import generic_nucleotide

>>> from Bio.Alphabet import IUPAC

>>> nuc_seq = Seq("GATCGATGC", generic_nucleotide)

>>> dna_seq = Seq("ACGT", IUPAC.unambiguous_dna)

>>> nuc_seq

Seq('GATCGATGC', NucleotideAlphabet())

>>> dna_seq

Seq('ACGT', IUPACUnambiguousDNA())

>>> nuc_seq + dna_seq

Seq('GATCGATGCACGT', NucleotideAlphabet())

You may often have many sequences to add together, which can be done with a for loop like this:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import generic_dna

>>> list_of_seqs = [Seq("ACGT", generic_dna), Seq("AACC", generic_dna), Seq("GGTT", generic_dna)]

>>> concatenated = Seq("", generic_dna)

>>> for s in list_of_seqs:

...     concatenated += s

...

>>> concatenated

Seq('ACGTAACCGGTT', DNAAlphabet())

Or, a more elegant approach is to use the built in sum function with its optional start value argument (which otherwise defaults to zero):

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import generic_dna

>>> list_of_seqs = [Seq("ACGT", generic_dna), Seq("AACC", generic_dna), Seq("GGTT", generic_dna)]

>>> sum(list_of_seqs, Seq("", generic_dna))

Seq('ACGTAACCGGTT', DNAAlphabet())

Unlike the Python string, the Biopython Seq does not (currently) have a .join method.
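Without a .join method, another generic option is functools.reduce, which relies only on the + operator. The sketch below uses plain strings so that it runs without Biopython (note that the built in sum refuses to add strings, so reduce is the closer analogue there); with Seq objects the start value would be an empty Seq, as in the sum example. The function name concatenate is hypothetical.

```python
from functools import reduce
import operator


def concatenate(items, start):
    """Add all items together with +, beginning from start."""
    return reduce(operator.add, items, start)


joined = concatenate(["ACGT", "AACC", "GGTT"], "")
print(joined)
```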

Python strings have very useful upper and lower methods for changing the case.

As of Biopython 1.53, the Seq object gained similar methods which are alphabet aware.

For example,

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import generic_dna

>>> dna_seq = Seq("acgtACGT", generic_dna)

>>> dna_seq

Seq('acgtACGT', DNAAlphabet())

>>> dna_seq.upper()

Seq('ACGTACGT', DNAAlphabet())

>>> dna_seq.lower()

Seq('acgtacgt', DNAAlphabet())

These are useful for doing case insensitive matching:

>>> "GTAC" in dna_seq

False

>>> "GTAC" in dna_seq.upper()

True

Note that strictly speaking the IUPAC alphabets are for upper case sequences only, thus:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import IUPAC

>>> dna_seq = Seq("ACGT", IUPAC.unambiguous_dna)

>>> dna_seq

Seq('ACGT', IUPACUnambiguousDNA())

>>> dna_seq.lower()

Seq('acgt', DNAAlphabet())

For nucleotide sequences, you can easily obtain the complement or reverse complement of a Seq object using its built-in methods:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import IUPAC

>>> my_seq = Seq("GATCGATGGGCCTATATAGGATCGAAAATCGC", IUPAC.unambiguous_dna)

>>> my_seq

Seq('GATCGATGGGCCTATATAGGATCGAAAATCGC', IUPACUnambiguousDNA())

>>> my_seq.complement()

Seq('CTAGCTACCCGGATATATCCTAGCTTTTAGCG', IUPACUnambiguousDNA())

>>> my_seq.reverse_complement()

Seq('GCGATTTTCGATCCTATATAGGCCCATCGATC', IUPACUnambiguousDNA())
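To show what these two methods compute, here is a plain-Python sketch for unambiguous DNA using a translation table. It is not Biopython's implementation and it deliberately ignores ambiguity letters; the names COMPLEMENT_TABLE, complement and reverse_complement are defined here only for illustration.

```python
# Each base is paired with its partner: A with T, and C with G.
COMPLEMENT_TABLE = str.maketrans("ACGTacgt", "TGCAtgca")


def complement(dna):
    """Swap each base for its partner, keeping the order."""
    return dna.translate(COMPLEMENT_TABLE)


def reverse_complement(dna):
    """Complement the sequence and then read it backwards."""
    return complement(dna)[::-1]


my_dna = "GATCGATGGGCCTATATAGGATCGAAAATCGC"
print(complement(my_dna))
print(reverse_complement(my_dna))
```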

As mentioned earlier, an easy way to just reverse a Seq object (or a Python string) is to slice it with a -1 step:

>>> my_seq[::-1]

Seq('CGCTAAAAGCTAGGATATATCCGGGTAGCTAG', IUPACUnambiguousDNA())

In all of these operations, the alphabet property is maintained. This is very useful in case you accidentally end up trying to do something weird like take the (reverse)complement of a protein sequence:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import IUPAC

>>> protein_seq = Seq("EVRNAK", IUPAC.protein)

>>> protein_seq.complement()

Traceback (most recent call last):

...

ValueError: Proteins do not have complements!

The example in Section 5.5.3 combines the Seq object’s reverse complement method with Bio.SeqIO for sequence input/output.

Before talking about transcription, I want to try and clarify the strand issue. Consider the following (made up) stretch of double stranded DNA which encodes a short peptide:

DNA coding strand (aka Crick strand, strand +1)

5’ ATGGCCATTGTAATGGGCCGCTGAAAGGGTGCCCGATAG 3’

|||||||||||||||||||||||||||||||||||||||

3’ TACCGGTAACATTACCCGGCGACTTTCCCACGGGCTATC 5’

DNA template strand (aka Watson strand, strand −1)

|

Transcription

↓

5’ AUGGCCAUUGUAAUGGGCCGCUGAAAGGGUGCCCGAUAG 3’

Single stranded messenger RNA

The actual biological transcription process works from the template strand, doing a reverse complement (TCAG → CUGA) to give the mRNA. However, in Biopython and bioinformatics in general, we typically work directly with the coding strand because this means we can get the mRNA sequence just by switching T → U.

Now let’s actually get down to doing a transcription in Biopython. First, let’s create Seq objects for the coding and template DNA strands:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import IUPAC

>>> coding_dna = Seq("ATGGCCATTGTAATGGGCCGCTGAAAGGGTGCCCGATAG", IUPAC.unambiguous_dna)

>>> coding_dna

Seq('ATGGCCATTGTAATGGGCCGCTGAAAGGGTGCCCGATAG', IUPACUnambiguousDNA())

>>> template_dna = coding_dna.reverse_complement()

>>> template_dna

Seq('CTATCGGGCACCCTTTCAGCGGCCCATTACAATGGCCAT', IUPACUnambiguousDNA())

These should match the figure above - remember by convention nucleotide sequences are normally read from the 5’ to 3’ direction, while in the figure the template strand is shown reversed.

Now let’s transcribe the coding strand into the corresponding mRNA, using the Seq object’s built in transcribe method:

>>> coding_dna

Seq('ATGGCCATTGTAATGGGCCGCTGAAAGGGTGCCCGATAG', IUPACUnambiguousDNA())

>>> messenger_rna = coding_dna.transcribe()

>>> messenger_rna

Seq('AUGGCCAUUGUAAUGGGCCGCUGAAAGGGUGCCCGAUAG', IUPACUnambiguousRNA())

As you can see, all this does is switch T → U, and adjust the alphabet.

If you do want to do a true biological transcription starting with the template strand, then this becomes a two-step process:

>>> template_dna.reverse_complement().transcribe()

Seq('AUGGCCAUUGUAAUGGGCCGCUGAAAGGGUGCCCGAUAG', IUPACUnambiguousRNA())
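To see why working from the coding strand is a safe shortcut, here is a plain-Python sketch (ordinary strings, hypothetical helper names, not Biopython code) which checks that both routes give the same mRNA:

```python
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(dna):
    return dna.translate(COMPLEMENT)[::-1]


def transcribe(coding_dna):
    """Bioinformatics shortcut: the coding strand with T replaced by U."""
    return coding_dna.replace("T", "U")


def transcribe_from_template(template_dna):
    """'Biological' route: reverse complement the template, then T -> U."""
    return transcribe(reverse_complement(template_dna))


coding = "ATGGCCATTGTAATGGGCCGCTGAAAGGGTGCCCGATAG"
template = reverse_complement(coding)
print(transcribe(coding) == transcribe_from_template(template))
```

Since taking the reverse complement twice gets you back where you started, the two routes always agree.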

The Seq object also includes a back-transcription method for going from the mRNA to the coding strand of the DNA. Again, this is a simple U → T substitution and associated change of alphabet:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import IUPAC

>>> messenger_rna = Seq("AUGGCCAUUGUAAUGGGCCGCUGAAAGGGUGCCCGAUAG", IUPAC.unambiguous_rna)

>>> messenger_rna

Seq('AUGGCCAUUGUAAUGGGCCGCUGAAAGGGUGCCCGAUAG', IUPACUnambiguousRNA())

>>> messenger_rna.back_transcribe()

Seq('ATGGCCATTGTAATGGGCCGCTGAAAGGGTGCCCGATAG', IUPACUnambiguousDNA())

Note: The Seq object’s transcribe and back_transcribe methods

were added in Biopython 1.49. For older releases you would have to use the Bio.Seq

module’s functions instead, see Section 3.14.

Sticking with the same example discussed in the transcription section above,

now let’s translate this mRNA into the corresponding protein sequence - again taking

advantage of one of the Seq object’s biological methods:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import IUPAC

>>> messenger_rna = Seq("AUGGCCAUUGUAAUGGGCCGCUGAAAGGGUGCCCGAUAG", IUPAC.unambiguous_rna)

>>> messenger_rna

Seq('AUGGCCAUUGUAAUGGGCCGCUGAAAGGGUGCCCGAUAG', IUPACUnambiguousRNA())

>>> messenger_rna.translate()

Seq('MAIVMGR*KGAR*', HasStopCodon(IUPACProtein(), '*'))

You can also translate directly from the coding strand DNA sequence:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import IUPAC

>>> coding_dna = Seq("ATGGCCATTGTAATGGGCCGCTGAAAGGGTGCCCGATAG", IUPAC.unambiguous_dna)

>>> coding_dna

Seq('ATGGCCATTGTAATGGGCCGCTGAAAGGGTGCCCGATAG', IUPACUnambiguousDNA())

>>> coding_dna.translate()

Seq('MAIVMGR*KGAR*', HasStopCodon(IUPACProtein(), '*'))

You should notice in the above protein sequences that in addition to the end stop character, there is an internal stop as well. This was a deliberate choice of example, as it gives an excuse to talk about some optional arguments, including different translation tables (Genetic Codes).

The translation tables available in Biopython are based on those from the NCBI (see the next section of this tutorial). By default, translation will use the standard genetic code (NCBI table id 1). Suppose we are dealing with a mitochondrial sequence. We need to tell the translation function to use the relevant genetic code instead:

>>> coding_dna.translate(table="Vertebrate Mitochondrial")

Seq('MAIVMGRWKGAR*', HasStopCodon(IUPACProtein(), '*'))

You can also specify the table using the NCBI table number which is shorter, and often included in the feature annotation of GenBank files:

>>> coding_dna.translate(table=2)

Seq('MAIVMGRWKGAR*', HasStopCodon(IUPACProtein(), '*'))

Now, you may want to translate the nucleotides up to the first in-frame stop codon, and then stop (as happens in nature):

>>> coding_dna.translate()

Seq('MAIVMGR*KGAR*', HasStopCodon(IUPACProtein(), '*'))

>>> coding_dna.translate(to_stop=True)

Seq('MAIVMGR', IUPACProtein())

>>> coding_dna.translate(table=2)

Seq('MAIVMGRWKGAR*', HasStopCodon(IUPACProtein(), '*'))

>>> coding_dna.translate(table=2, to_stop=True)

Seq('MAIVMGRWKGAR', IUPACProtein())

Notice that when you use the to_stop argument, the stop codon itself

is not translated - and the stop symbol is not included at the end of your protein

sequence.

You can even specify the stop symbol if you don’t like the default asterisk:

>>> coding_dna.translate(table=2, stop_symbol="@")

Seq('MAIVMGRWKGAR@', HasStopCodon(IUPACProtein(), '@'))
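If you are curious what these options amount to, the following is a rough plain-Python sketch of codon-by-codon translation. It is not how Biopython implements translate; the function and variable names are invented for this illustration, and it only handles upper case unambiguous DNA:

```python
from itertools import product

BASES = "TCAG"
# Amino acids of the standard code (NCBI table 1), codons ordered TTT, TTC, TTA, ...
STANDARD_AA = ("FFLLSSSSYY**CC*W" "LLLLPPPPHHQQRRRR"
               "IIIMTTTTNNKKSSRR" "VVVVAAAADDEEGGGG")
STANDARD = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), STANDARD_AA)}

# Vertebrate mitochondrial code (NCBI table 2): four codons differ from table 1.
VERT_MITO = dict(STANDARD, TGA="W", ATA="M", AGA="*", AGG="*")


def translate(dna, table=STANDARD, to_stop=False, stop_symbol="*"):
    """Translate frame 0 of dna; any trailing partial codon is ignored."""
    protein = []
    for i in range(0, len(dna) - len(dna) % 3, 3):
        aa = table[dna[i:i + 3]]  # raises KeyError for unexpected letters
        if aa == "*":
            if to_stop:
                break  # stop before the stop codon, which is not reported
            aa = stop_symbol
        protein.append(aa)
    return "".join(protein)


coding = "ATGGCCATTGTAATGGGCCGCTGAAAGGGTGCCCGATAG"
print(translate(coding))
print(translate(coding, to_stop=True))
print(translate(coding, table=VERT_MITO, stop_symbol="@"))
```

The point of the sketch is that a different genetic code is nothing more than a different codon lookup, while to_stop and stop_symbol only change what happens when a stop codon is met.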

Now, suppose you have a complete coding sequence (CDS), which is to say a

nucleotide sequence (e.g. mRNA – after any splicing) which is a whole number

of codons (i.e. the length is a multiple of three), commences with a start

codon, ends with a stop codon, and has no internal in-frame stop codons.

In general, given a complete CDS, the default translate method will do what

you want (perhaps with the to_stop option). However, what if your

sequence uses a non-standard start codon? This happens a lot in bacteria –

for example the gene yaaX in E. coli K12:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import generic_dna

>>> gene = Seq("GTGAAAAAGATGCAATCTATCGTACTCGCACTTTCCCTGGTTCTGGTCGCTCCCATGGCA" + \

... "GCACAGGCTGCGGAAATTACGTTAGTCCCGTCAGTAAAATTACAGATAGGCGATCGTGAT" + \

... "AATCGTGGCTATTACTGGGATGGAGGTCACTGGCGCGACCACGGCTGGTGGAAACAACAT" + \

... "TATGAATGGCGAGGCAATCGCTGGCACCTACACGGACCGCCGCCACCGCCGCGCCACCAT" + \

... "AAGAAAGCTCCTCATGATCATCACGGCGGTCATGGTCCAGGCAAACATCACCGCTAA",

... generic_dna)

>>> gene.translate(table="Bacterial")

Seq('VKKMQSIVLALSLVLVAPMAAQAAEITLVPSVKLQIGDRDNRGYYWDGGHWRDH...HR*',

HasStopCodon(ExtendedIUPACProtein(), '*'))

>>> gene.translate(table="Bacterial", to_stop=True)

Seq('VKKMQSIVLALSLVLVAPMAAQAAEITLVPSVKLQIGDRDNRGYYWDGGHWRDH...HHR',

ExtendedIUPACProtein())

In the bacterial genetic code GTG is a valid start codon, and while it does normally encode valine, if used as a start codon it should be translated as methionine. This happens if you tell Biopython your sequence is a complete CDS:

>>> gene.translate(table="Bacterial", cds=True)

Seq('MKKMQSIVLALSLVLVAPMAAQAAEITLVPSVKLQIGDRDNRGYYWDGGHWRDH...HHR',

ExtendedIUPACProtein())

In addition to telling Biopython to translate an alternative start codon as methionine, using this option also makes sure your sequence really is a valid CDS (you’ll get an exception if not).
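As a rough illustration of the kind of checks involved, here is a plain-Python sketch of a strict CDS translation. This is only a sketch under stated assumptions: the function name is made up, the set of start codons is a small illustrative subset rather than the full list from the bacterial table, and the amino acid assignments are those of the standard table (which the bacterial table shares):

```python
from itertools import product

AA = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
TABLE = {"".join(c): aa for c, aa in zip(product("TCAG", repeat=3), AA)}
# Assumption for this sketch: an illustrative subset of bacterial start codons.
START_CODONS = {"ATG", "GTG", "TTG"}


def translate_cds(dna):
    """Translate a complete CDS, raising ValueError if it is not valid."""
    if len(dna) % 3:
        raise ValueError("length is not a multiple of three")
    codons = [dna[i:i + 3] for i in range(0, len(dna), 3)]
    if not codons or codons[0] not in START_CODONS:
        raise ValueError("first codon is not a start codon")
    if TABLE[codons[-1]] != "*":
        raise ValueError("final codon is not a stop codon")
    body = "".join(TABLE[c] for c in codons[1:-1])
    if "*" in body:
        raise ValueError("extra in-frame stop codon found")
    # Whatever the start codon normally encodes, it is read as methionine.
    return "M" + body


print(translate_cds("GTGAAAAAGATGTAA"))
```

Note how the first codon is only checked, never looked up in the table, which is why GTG comes out as M here rather than V.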

The example in Section 18.1.3 combines the Seq object’s

translate method with Bio.SeqIO for sequence input/output.

In the previous sections we talked about the Seq object translation method (and mentioned the equivalent function in the Bio.Seq module – see

Section 3.14).

Internally these use codon table objects derived from the NCBI information at

ftp://ftp.ncbi.nlm.nih.gov/entrez/misc/data/gc.prt, also shown on

http://www.ncbi.nlm.nih.gov/Taxonomy/Utils/wprintgc.cgi in a much more readable layout.

As before, let’s just focus on two choices: the Standard translation table, and the translation table for Vertebrate Mitochondrial DNA.

>>> from Bio.Data import CodonTable

>>> standard_table = CodonTable.unambiguous_dna_by_name["Standard"]

>>> mito_table = CodonTable.unambiguous_dna_by_name["Vertebrate Mitochondrial"]

Alternatively, these tables are labeled with ID numbers 1 and 2, respectively:

>>> from Bio.Data import CodonTable

>>> standard_table = CodonTable.unambiguous_dna_by_id[1]

>>> mito_table = CodonTable.unambiguous_dna_by_id[2]

You can compare the actual tables visually by printing them:

>>> print(standard_table)

Table 1 Standard, SGC0

  |  T      |  C      |  A      |  G      |
--+---------+---------+---------+---------+--
T | TTT F   | TCT S   | TAT Y   | TGT C   | T
T | TTC F   | TCC S   | TAC Y   | TGC C   | C
T | TTA L   | TCA S   | TAA Stop| TGA Stop| A
T | TTG L(s)| TCG S   | TAG Stop| TGG W   | G
--+---------+---------+---------+---------+--
C | CTT L   | CCT P   | CAT H   | CGT R   | T
C | CTC L   | CCC P   | CAC H   | CGC R   | C
C | CTA L   | CCA P   | CAA Q   | CGA R   | A
C | CTG L(s)| CCG P   | CAG Q   | CGG R   | G
--+---------+---------+---------+---------+--
A | ATT I   | ACT T   | AAT N   | AGT S   | T
A | ATC I   | ACC T   | AAC N   | AGC S   | C
A | ATA I   | ACA T   | AAA K   | AGA R   | A
A | ATG M(s)| ACG T   | AAG K   | AGG R   | G
--+---------+---------+---------+---------+--
G | GTT V   | GCT A   | GAT D   | GGT G   | T
G | GTC V   | GCC A   | GAC D   | GGC G   | C
G | GTA V   | GCA A   | GAA E   | GGA G   | A
G | GTG V   | GCG A   | GAG E   | GGG G   | G
--+---------+---------+---------+---------+--

and:

>>> print(mito_table)

Table 2 Vertebrate Mitochondrial, SGC1

  |  T      |  C      |  A      |  G      |
--+---------+---------+---------+---------+--
T | TTT F   | TCT S   | TAT Y   | TGT C   | T
T | TTC F   | TCC S   | TAC Y   | TGC C   | C
T | TTA L   | TCA S   | TAA Stop| TGA W   | A
T | TTG L   | TCG S   | TAG Stop| TGG W   | G
--+---------+---------+---------+---------+--
C | CTT L   | CCT P   | CAT H   | CGT R   | T
C | CTC L   | CCC P   | CAC H   | CGC R   | C
C | CTA L   | CCA P   | CAA Q   | CGA R   | A
C | CTG L   | CCG P   | CAG Q   | CGG R   | G
--+---------+---------+---------+---------+--
A | ATT I(s)| ACT T   | AAT N   | AGT S   | T
A | ATC I(s)| ACC T   | AAC N   | AGC S   | C
A | ATA M(s)| ACA T   | AAA K   | AGA Stop| A
A | ATG M(s)| ACG T   | AAG K   | AGG Stop| G
--+---------+---------+---------+---------+--
G | GTT V   | GCT A   | GAT D   | GGT G   | T
G | GTC V   | GCC A   | GAC D   | GGC G   | C
G | GTA V   | GCA A   | GAA E   | GGA G   | A
G | GTG V(s)| GCG A   | GAG E   | GGG G   | G
--+---------+---------+---------+---------+--

You may find the following properties useful – for example if you are trying to do your own gene finding:

>>> mito_table.stop_codons

['TAA', 'TAG', 'AGA', 'AGG']

>>> mito_table.start_codons

['ATT', 'ATC', 'ATA', 'ATG', 'GTG']

>>> mito_table.forward_table["ACG"]

'T'
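To give a flavour of how start and stop codon lists could be used for simple gene finding, here is a deliberately naive sketch in plain Python. The find_orfs function is hypothetical (it is not a Biopython function), it only scans one forward reading frame, and the codon lists are simply copied from the mito_table output above:

```python
def find_orfs(dna, start_codons, stop_codons, frame=0):
    """Return (start, end) index pairs for ORFs in one forward reading frame."""
    orfs = []
    orf_start = None
    for i in range(frame, len(dna) - 2, 3):
        codon = dna[i:i + 3]
        if orf_start is None and codon in start_codons:
            orf_start = i
        elif orf_start is not None and codon in stop_codons:
            orfs.append((orf_start, i + 3))  # end index includes the stop codon
            orf_start = None
    return orfs


# Codon lists copied from the mito_table properties shown above
mito_starts = ["ATT", "ATC", "ATA", "ATG", "GTG"]
mito_stops = ["TAA", "TAG", "AGA", "AGG"]
dna = "ATGGCCATTGTAATGGGCCGCTGAAAGGGTGCCCGATAG"
print(find_orfs(dna, mito_starts, mito_stops))
```

A real gene finder would also look at the other five reading frames, and would usually impose a minimum length.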

Sequence comparison is actually a very complicated topic, and there is no easy

way to decide if two sequences are equal. The basic problem is that the meaning of

the letters in a sequence is context dependent - the letter “A” could be part

of a DNA, RNA or protein sequence. Biopython uses alphabet objects as part of

each Seq object to try and capture this information - so comparing two

Seq objects means considering both the sequence strings and the

alphabets.

For example, you might argue that the two DNA Seq objects

Seq("ACGT", IUPAC.unambiguous_dna) and

Seq("ACGT", IUPAC.ambiguous_dna) should be equal, even though

they do have different alphabets. Depending on the context this could be

important.

This gets worse – suppose you think Seq("ACGT",

IUPAC.unambiguous_dna) and Seq("ACGT") (i.e. the default generic

alphabet) should be equal. Then, logically, Seq("ACGT", IUPAC.protein)

and Seq("ACGT") should also be equal. Now, in logic if A=B and

B=C, by transitivity we expect A=C. So for logical consistency we’d

require Seq("ACGT", IUPAC.unambiguous_dna) and Seq("ACGT",

IUPAC.protein) to be equal – which most people would agree is just not right.

This transitivity problem would also have implications for using Seq

objects as Python dictionary keys.

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import IUPAC

>>> seq1 = Seq("ACGT", IUPAC.unambiguous_dna)

>>> seq2 = Seq("ACGT", IUPAC.unambiguous_dna)

So, what does Biopython do? Well, the equality test is the default for Python objects – it tests to see if they are the same object in memory. This is a very strict test:

>>> seq1 == seq2

False

>>> seq1 == seq1

True

If you actually want to do this, you can be more explicit by using the Python

id function,

>>> id(seq1) == id(seq2)

False

>>> id(seq1) == id(seq1)

True

Now, in every day use, your sequences will probably all have the same alphabet, or at least all be the same type of sequence (all DNA, all RNA, or all protein). What you probably want is to just compare the sequences as strings – so do this explicitly:

>>> str(seq1) == str(seq2)

True

>>> str(seq1) == str(seq1)

True

As an extension to this, while you can use a Python dictionary with

Seq objects as keys, it is generally more useful to use the sequence as a

string for the key. See also Section 3.4.
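The identity-based equality described above is simply what any Python class gets if it does not define its own comparison. The following sketch uses a toy stand-in class (ToySeq is made up for this example and is not Biopython's Seq) to show the difference, and why strings make more predictable dictionary keys:

```python
class ToySeq:
    """Stand-in for a sequence object which does not define __eq__."""

    def __init__(self, data):
        self.data = data

    def __str__(self):
        return self.data


seq1 = ToySeq("ACGT")
seq2 = ToySeq("ACGT")
print(seq1 == seq2)            # default equality compares object identity
print(str(seq1) == str(seq2))  # explicit comparison of the letters

# Count sequences by their letters, using strings as the dictionary keys
counts = {}
for seq in (seq1, seq2):
    counts[str(seq)] = counts.get(str(seq), 0) + 1
print(counts)
```

Had the objects themselves been used as keys, the two identical sequences would have ended up as two separate entries.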

Just like the normal Python string, the Seq object is “read only”, or in Python terminology, immutable. Apart from wanting the Seq object to act like a string, this is also a useful default since in many biological applications you want to ensure you are not changing your sequence data:

>>> from Bio.Seq import Seq

>>> from Bio.Alphabet import IUPAC

>>> my_seq = Seq("GCCATTGTAATGGGCCGCTGAAAGGGTGCCCGA", IUPAC.unambiguous_dna)

Observe what happens if you try to edit the sequence:

>>> my_seq[5] = "G"

Traceback (most recent call last):

...

TypeError: 'Seq' object does not support item assignment

However, you can convert it into a mutable sequence (a MutableSeq object) and do pretty much anything you want with it:

>>> mutable_seq = my_seq.tomutable()

>>> mutable_seq

MutableSeq('GCCATTGTAATGGGCCGCTGAAAGGGTGCCCGA', IUPACUnambiguousDNA())

Alternatively, you can create a MutableSeq object directly from a string:

>>> from Bio.Seq import MutableSeq

>>> from Bio.Alphabet import IUPAC

>>> mutable_seq = MutableSeq("GCCATTGTAATGGGCCGCTGAAAGGGTGCCCGA", IUPAC.unambiguous_dna)

Either way will give you a sequence object which can be changed:

>>> mutable_seq

MutableSeq('GCCATTGTAATGGGCCGCTGAAAGGGTGCCCGA', IUPACUnambiguousDNA())

>>> mutable_seq[5] = "C"

>>> mutable_seq

MutableSeq('GCCATCGTAATGGGCCGCTGAAAGGGTGCCCGA', IUPACUnambiguousDNA())

>>> mutable_seq.remove("T")

>>> mutable_seq

MutableSeq('GCCACGTAATGGGCCGCTGAAAGGGTGCCCGA', IUPACUnambiguousDNA())

>>> mutable_seq.reverse()

>>> mutable_seq

MutableSeq('AGCCCGTGGGAAAGTCGCCGGGTAATGCACCG', IUPACUnambiguousDNA())

Do note that unlike the Seq object, the MutableSeq object’s methods like reverse_complement() and reverse() act in-situ!

An important technical difference between mutable and immutable objects in Python means that you can’t use a MutableSeq object as a dictionary key, but you can use a Python string or a Seq object in this way.
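The same distinction exists among Python's built in types, which may help make it concrete. In this sketch a bytearray plays the role of the mutable sequence and an ordinary string the immutable one; this is only an analogy and involves no Biopython objects:

```python
editable = bytearray(b"GCCATTGTAATGG")
editable[5:6] = b"C"   # editing works on a mutable sequence
editable.reverse()     # acts in place and returns None
frozen = bytes(editable).decode("ascii")  # back to an immutable string

lookup = {frozen: "fine"}  # an immutable string is a valid dictionary key
try:
    lookup[editable] = "oops"
    editable_is_hashable = True
except TypeError:
    editable_is_hashable = False  # mutable objects like bytearray are unhashable

print(frozen, editable_is_hashable)
```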

Once you have finished editing your MutableSeq object, it’s easy to get back to a read-only Seq object should you need to:

>>> new_seq = mutable_seq.toseq()

>>> new_seq

Seq('AGCCCGTGGGAAAGTCGCCGGGTAATGCACCG', IUPACUnambiguousDNA())

You can also get a string from a MutableSeq object just like from a Seq object (Section 3.4).

The UnknownSeq object is a subclass of the basic Seq object

and its purpose is to represent a

sequence where we know the length, but not the actual letters making it up.

You could of course use a normal Seq object in this situation, but it wastes

rather a lot of memory to hold a string of a million “N” characters when you could

just store a single letter “N” and the desired length as an integer.

>>> from Bio.Seq import UnknownSeq

>>> unk = UnknownSeq(20)

>>> unk

UnknownSeq(20, alphabet = Alphabet(), character = '?')

>>> print(unk)

????????????????????

>>> len(unk)

20

You can of course specify an alphabet, meaning for nucleotide sequences the letter defaults to “N” and for proteins “X”, rather than just “?”.

>>> from Bio.Seq import UnknownSeq

>>> from Bio.Alphabet import IUPAC

>>> unk_dna = UnknownSeq(20, alphabet=IUPAC.ambiguous_dna)

>>> unk_dna

UnknownSeq(20, alphabet = IUPACAmbiguousDNA(), character = 'N')

>>> print(unk_dna)

NNNNNNNNNNNNNNNNNNNN

You can use all the usual Seq object methods too. Note that these give back

memory saving UnknownSeq objects where appropriate, as you might expect:

>>> unk_dna

UnknownSeq(20, alphabet = IUPACAmbiguousDNA(), character = 'N')

>>> unk_dna.complement()

UnknownSeq(20, alphabet = IUPACAmbiguousDNA(), character = 'N')

>>> unk_dna.reverse_complement()

UnknownSeq(20, alphabet = IUPACAmbiguousDNA(), character = 'N')

>>> unk_dna.transcribe()

UnknownSeq(20, alphabet = IUPACAmbiguousRNA(), character = 'N')

>>> unk_protein = unk_dna.translate()

>>> unk_protein

UnknownSeq(6, alphabet = ProteinAlphabet(), character = 'X')

>>> print(unk_protein)

XXXXXX

>>> len(unk_protein)

6
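The memory saving trick is easy to picture with a toy class which stores only a length and a placeholder letter, and builds the full string on demand. This is a sketch of the idea only; ToyUnknownSeq is a made-up name and the real UnknownSeq does a good deal more:

```python
class ToyUnknownSeq:
    """Remember only a length and a placeholder letter."""

    def __init__(self, length, character="?"):
        self.length = length
        self.character = character

    def __len__(self):
        return self.length

    def __str__(self):
        # The full string is only created when somebody asks for it
        return self.character * self.length

    def translate(self):
        # Three unknown bases give one unknown residue; leftovers are dropped
        return ToyUnknownSeq(self.length // 3, "X")


unk_dna = ToyUnknownSeq(20, "N")
unk_protein = unk_dna.translate()
print(unk_dna, len(unk_protein), unk_protein)
```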

You may be able to find a use for the UnknownSeq object in your own

code, but it is more likely that you will first come across them in a

SeqRecord object created by Bio.SeqIO

(see Chapter 5).

Some sequence file formats don’t always include the actual sequence, for

example GenBank and EMBL files may include a list of features but for the

sequence just present the contig information. Alternatively, the QUAL files

used in sequencing work hold quality scores but they never contain a

sequence – instead there is a partner FASTA file which does have the

sequence.

To close this chapter, for those of you who really don’t want to use the sequence

objects (or who prefer a functional programming style to an object orientated one),

there are module level functions in Bio.Seq which will accept plain Python strings,

Seq objects (including UnknownSeq objects) or MutableSeq objects:

>>> from Bio.Seq import reverse_complement, transcribe, back_transcribe, translate

>>> my_string = "GCTGTTATGGGTCGTTGGAAGGGTGGTCGTGCTGCTGGTTAG"

>>> reverse_complement(my_string)

'CTAACCAGCAGCACGACCACCCTTCCAACGACCCATAACAGC'

>>> transcribe(my_string)

'GCUGUUAUGGGUCGUUGGAAGGGUGGUCGUGCUGCUGGUUAG'

>>> back_transcribe(my_string)

'GCTGTTATGGGTCGTTGGAAGGGTGGTCGTGCTGCTGGTTAG'

>>> translate(my_string)

'AVMGRWKGGRAAG*'

You are, however, encouraged to work with Seq objects by default.

Chapter 3 introduced the sequence classes. Immediately “above” the Seq class is the Sequence Record or SeqRecord class, defined in the Bio.SeqRecord module. This class allows higher level features such as identifiers and features (as SeqFeature objects) to be associated with the sequence, and is used throughout the sequence input/output interface Bio.SeqIO described fully in Chapter 5.

If you are only going to be working with simple data like FASTA files, you can probably skip this chapter for now. If on the other hand you are going to be using richly annotated sequence data, say from GenBank or EMBL files, this information is quite important.

While this chapter should cover most things to do with the SeqRecord and SeqFeature objects, you may also want to read the SeqRecord wiki page (http://biopython.org/wiki/SeqRecord), and the built in documentation (also online – SeqRecord and SeqFeature):

>>> from Bio.SeqRecord import SeqRecord

>>> help(SeqRecord)

...

The SeqRecord (Sequence Record) class is defined in the Bio.SeqRecord module. This class allows higher level features such as identifiers and features to be associated with a sequence (see Chapter 3), and is the basic data type for the Bio.SeqIO sequence input/output interface (see Chapter 5).

The SeqRecord class itself is quite simple, and offers the following information as attributes:

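.seq – the sequence itself, typically a Seq object.

.id, .name and .description – strings which identify and describe the sequence.

.letter_annotations – a dictionary-like attribute holding per-letter annotations, each a Python sequence with the same length as the sequence itself.

.annotations – a dictionary for any miscellaneous annotations which don’t fit under one of the other more specific attributes.

.features – a list of SeqFeature objects with more structured information about the features on a sequence (e.g. position of genes on a genome, or domains on a protein sequence). The structure of sequence features is described below in Section 4.3.

.dbxrefs – a list of database cross-references as strings.

Using a SeqRecord object is not very complicated, since all of the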

information is presented as attributes of the class. Usually you won’t create

a SeqRecord “by hand”, but instead use Bio.SeqIO to read in a

sequence file for you (see Chapter 5 and the examples

below). However, creating a SeqRecord can be quite simple.

To create a SeqRecord at a minimum you just need a Seq object:

>>> from Bio.Seq import Seq

>>> simple_seq = Seq("GATC")

>>> from Bio.SeqRecord import SeqRecord

>>> simple_seq_r = SeqRecord(simple_seq)

You can also pass the id, name and description to the initialization function. If you don’t, they will be set to strings indicating they are unknown, and can be modified subsequently:

>>> simple_seq_r.id

'<unknown id>'

>>> simple_seq_r.id = "AC12345"

>>> simple_seq_r.description = "Made up sequence I wish I could write a paper about"

>>> print(simple_seq_r.description)

Made up sequence I wish I could write a paper about

>>> simple_seq_r.seq

Seq('GATC', Alphabet())

Including an identifier is very important if you want to output your SeqRecord to a file. You would normally include this when creating the object:

>>> from Bio.Seq import Seq

>>> simple_seq = Seq("GATC")

>>> from Bio.SeqRecord import SeqRecord

>>> simple_seq_r = SeqRecord(simple_seq, id="AC12345")

As mentioned above, the SeqRecord has a dictionary attribute annotations. This is used

for any miscellaneous annotations that don’t fit under one of the other more specific attributes.

Adding annotations is easy, and just involves dealing directly with the annotation dictionary:

>>> simple_seq_r.annotations["evidence"] = "None. I just made it up."

>>> print(simple_seq_r.annotations)

{'evidence': 'None. I just made it up.'}

>>> print(simple_seq_r.annotations["evidence"])

None. I just made it up.

Working with per-letter-annotations is similar: letter_annotations is a

dictionary-like attribute which will let you assign any Python sequence (i.e.

a string, list or tuple) which has the same length as the sequence:

>>> simple_seq_r.letter_annotations["phred_quality"] = [40, 40, 38, 30]

>>> print(simple_seq_r.letter_annotations)

{'phred_quality': [40, 40, 38, 30]}

>>> print(simple_seq_r.letter_annotations["phred_quality"])

[40, 40, 38, 30]

The dbxrefs and features attributes are just Python lists, and

should be used to store strings and SeqFeature objects (discussed later

in this chapter) respectively.
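If it helps to see all of these attributes side by side, the sketch below models them with a small dataclass. To be clear, ToyRecord and its add_letter_annotation helper are inventions for this illustration and are not how the real SeqRecord is written; the sketch just mirrors the attribute names, the unknown-string defaults, and the rule that per-letter annotations must match the sequence length:

```python
from dataclasses import dataclass, field


@dataclass
class ToyRecord:
    seq: str
    id: str = "<unknown id>"
    name: str = "<unknown name>"
    description: str = "<unknown description>"
    dbxrefs: list = field(default_factory=list)    # strings
    features: list = field(default_factory=list)   # feature objects
    annotations: dict = field(default_factory=dict)
    letter_annotations: dict = field(default_factory=dict)

    def add_letter_annotation(self, key, values):
        """Store one value per letter, refusing anything of the wrong length."""
        if len(values) != len(self.seq):
            raise ValueError("need one value per letter of the sequence")
        self.letter_annotations[key] = values


rec = ToyRecord("GATC", id="AC12345")
rec.annotations["evidence"] = "None. I just made it up."
rec.add_letter_annotation("phred_quality", [40, 40, 38, 30])
print(rec.id, rec.name, rec.letter_annotations)
```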

This example uses a fairly large FASTA file containing the whole sequence for Yersinia pestis biovar Microtus str. 91001 plasmid pPCP1, originally downloaded from the NCBI. This file is included with the Biopython unit tests under the GenBank folder, or online NC_005816.fna from our website.

The file starts like this - and you can check there is only one record present (i.e. only one line starting with a greater than symbol):

>gi|45478711|ref|NC_005816.1| Yersinia pestis biovar Microtus ... pPCP1, complete sequence

TGTAACGAACGGTGCAATAGTGATCCACACCCAACGCCTGAAATCAGATCCAGGGGGTAATCTGCTCTCC

...

Back in Chapter 2 you will have seen the function Bio.SeqIO.parse(...)

used to loop over all the records in a file as SeqRecord objects. The Bio.SeqIO module

has a sister function for use on files which contain just one record which we’ll use here (see Chapter 5 for details):

>>> from Bio import SeqIO

>>> record = SeqIO.read("NC_005816.fna", "fasta")

>>> record

SeqRecord(seq=Seq('TGTAACGAACGGTGCAATAGTGATCCACACCCAACGCCTGAAATCAGATCCAGG...CTG',

SingleLetterAlphabet()), id='gi|45478711|ref|NC_005816.1|', name='gi|45478711|ref|NC_005816.1|',

description='gi|45478711|ref|NC_005816.1| Yersinia pestis biovar Microtus ... sequence',

dbxrefs=[])

Now, let’s have a look at the key attributes of this SeqRecord

individually – starting with the seq attribute which gives you a

Seq object:

>>> record.seq

Seq('TGTAACGAACGGTGCAATAGTGATCCACACCCAACGCCTGAAATCAGATCCAGG...CTG', SingleLetterAlphabet())

Here Bio.SeqIO has defaulted to a generic alphabet, rather

than guessing that this is DNA. If you know in advance what kind of sequence

your FASTA file contains, you can tell Bio.SeqIO which alphabet to use

(see Chapter 5).

Next, the identifiers and description:

>>> record.id

'gi|45478711|ref|NC_005816.1|'

>>> record.name

'gi|45478711|ref|NC_005816.1|'

>>> record.description

'gi|45478711|ref|NC_005816.1| Yersinia pestis biovar Microtus ... pPCP1, complete sequence'

As you can see above, the first word of the FASTA record’s title line (after

removing the greater than symbol) is used for both the id and

name attributes. The whole title line (after removing the greater than

symbol) is used for the record description. This is deliberate, partly for

backwards compatibility reasons, but it also makes sense if you have a FASTA

file like this:

>Yersinia pestis biovar Microtus str. 91001 plasmid pPCP1

TGTAACGAACGGTGCAATAGTGATCCACACCCAACGCCTGAAATCAGATCCAGGGGGTAATCTGCTCTCC

...

Note that none of the other annotation attributes get populated when reading a FASTA file:

>>> record.dbxrefs

[]

>>> record.annotations

{}

>>> record.letter_annotations

{}

>>> record.features

[]

In this case our example FASTA file was from the NCBI, and they have a fairly well defined set of conventions for formatting their FASTA lines. This means it would be possible to parse this information and extract the GI number and accession for example. However, FASTA files from other sources vary, so this isn’t possible in general.
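For this particular file, such parsing could look something like the following plain-Python sketch. It assumes the pipe-separated layout of the title line shown above, with alternating field names and values; both function names are made up for the example, and other FASTA files will need different handling:

```python
def split_title(title):
    """Split a FASTA title line (without the '>') into id and description."""
    record_id = title.split(None, 1)[0]  # first word of the title line
    return record_id, title


def parse_ncbi_id(record_id):
    """Pair up the '|' separated fields, e.g. 'gi|123|ref|XYZ|'."""
    fields = record_id.strip("|").split("|")
    return dict(zip(fields[0::2], fields[1::2]))


title = ("gi|45478711|ref|NC_005816.1| Yersinia pestis biovar Microtus "
         "... pPCP1, complete sequence")
record_id, description = split_title(title)
ids = parse_ncbi_id(record_id)
print(ids["gi"], ids["ref"])
```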

As in the previous example, we’re going to look at the whole sequence for Yersinia pestis biovar Microtus str. 91001 plasmid pPCP1, originally downloaded from the NCBI, but this time as a GenBank file. Again, this file is included with the Biopython unit tests under the GenBank folder, or online NC_005816.gb from our website.

This file contains a single record (i.e. only one LOCUS line) and starts:

LOCUS NC_005816 9609 bp DNA circular BCT 21-JUL-2008

DEFINITION Yersinia pestis biovar Microtus str. 91001 plasmid pPCP1, complete

sequence.

ACCESSION NC_005816

VERSION NC_005816.1 GI:45478711

PROJECT GenomeProject:10638

...

Again, we’ll use Bio.SeqIO to read this file in, and the code is almost identical to that used above for the FASTA file (see Chapter 5 for details):

>>> from Bio import SeqIO

>>> record = SeqIO.read("NC_005816.gb", "genbank")

>>> record

SeqRecord(seq=Seq('TGTAACGAACGGTGCAATAGTGATCCACACCCAACGCCTGAAATCAGATCCAGG...CTG',

IUPACAmbiguousDNA()), id='NC_005816.1', name='NC_005816',

description='Yersinia pestis biovar Microtus str. 91001 plasmid pPCP1, complete sequence.',

dbxrefs=['Project:10638'])

You should be able to spot some differences already! Taking the attributes individually,

the sequence string is the same as before, but this time Bio.SeqIO has been able to automatically assign a more specific alphabet (see Chapter 5 for details):

>>> record.seq

Seq('TGTAACGAACGGTGCAATAGTGATCCACACCCAACGCCTGAAATCAGATCCAGG...CTG', IUPACAmbiguousDNA())

The name comes from the LOCUS line, while the id includes the version suffix.

The description comes from the DEFINITION line:

>>> record.id

'NC_005816.1'

>>> record.name

'NC_005816'

>>> record.description

'Yersinia pestis biovar Microtus str. 91001 plasmid pPCP1, complete sequence.'

GenBank files don’t have any per-letter annotations:

>>> record.letter_annotations

{}

Most of the annotations information gets recorded in the annotations dictionary, for example:

>>> len(record.annotations)

11

>>> record.annotations["source"]

'Yersinia pestis biovar Microtus str. 91001'

The dbxrefs list gets populated from any PROJECT or DBLINK lines:

>>> record.dbxrefs

['Project:10638']

Finally, and perhaps most interestingly, all the entries in the features table (e.g. the genes or CDS features) get recorded as SeqFeature objects in the features list.

>>> len(record.features)

29

We’ll talk about SeqFeature objects next, in

Section 4.3.

Sequence features are an essential part of describing a sequence. Once you get beyond the sequence itself, you need some way to organize and easily get at the more “abstract” information that is known about the sequence. While it is probably impossible to develop a general sequence feature class that will cover everything, the Biopython SeqFeature class attempts to encapsulate as much of the information about the sequence as possible. The design is heavily based on the GenBank/EMBL feature tables, so if you understand how they look, you’ll probably have an easier time grasping the structure of the Biopython classes.

The key idea about each SeqFeature object is to describe a region on a parent sequence, typically a SeqRecord object. That region is described with a location object, typically a range between two positions (see Section 4.3.2 below).

The SeqFeature class has a number of attributes, so first we’ll list them and their general features, and then later in the chapter work through examples to show how this applies to a real life example. The attributes of a SeqFeature are:

.location – the location of the SeqFeature on the sequence that you are dealing with, see Section 4.3.2 below. The SeqFeature delegates much of its functionality to the location object, and includes a number of shortcut attributes for properties of the location:

.location.ref – any (different) reference sequence the location is referring to. Usually just None.

.location.ref_db – specifies the database any identifier in .ref refers to. Usually just None.

.location.strand – the strand on the sequence that the feature is located on. For double stranded nucleotide sequence this may either be 1 for the top strand, −1 for the bottom strand, 0 if the strand is important but is unknown, or None if it doesn’t matter. This is None for proteins, or single stranded sequences.

CompoundLocation object, and should now be ignored.

The key idea about each SeqFeature object is to describe a

region on a parent sequence, for which we use a location object,

typically describing a range between two positions. To try to

clarify the terminology we’re using:

<100 and >200 are all positions.

I just mention this because sometimes I get confused between the two.

Unless you work with eukaryotic genes, most SeqFeature locations are

extremely simple - you just need start and end coordinates and a strand.

That’s essentially all the basic FeatureLocation object does.

In practice, of course, things can be more complicated. First of all we have to handle compound locations made up of several regions. Secondly, the positions themselves may be fuzzy (inexact).

Biopython 1.62 introduced the CompoundLocation as part of

a restructuring of how complex locations made up of multiple regions

are represented.

The main usage is for handling ‘join’ locations in EMBL/GenBank files.
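Conceptually, a ‘join’ just means taking several regions in turn and stitching the pieces together. Here is a plain-Python sketch of that idea, using a made-up sequence and made-up coordinates (Python style counting, forward strand only). It does not use the CompoundLocation class itself:

```python
def extract_join(sequence, parts):
    """Concatenate the sub-sequences for each (start, end) part, in order."""
    return "".join(sequence[start:end] for start, end in parts)


# Made-up example: two 'exons' separated by a run of T
genome = "AAATGGCCTTTTTTATTGTATAGCC"
exons = [(2, 8), (14, 23)]
print(extract_join(genome, exons))
```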

So far we’ve only used simple positions. One complication in dealing with feature locations comes in the positions themselves. In biology many times things aren’t entirely certain (as much as us wet lab biologists try to make them certain!). For instance, you might do a dinucleotide priming experiment and discover that the mRNA transcript starts at one of two sites. This is very useful information, but the complication comes in how to represent this as a position. To help us deal with this, we have the concept of fuzzy positions. Basically there are several types of fuzzy positions, so we have five classes to deal with them:

position attribute of the object.

BeforePosition – represented in GenBank as something like ‘<13’, signifying that the real position is located somewhere less than 13. To get the specified upper boundary, look at the position attribute of the object.

AfterPosition – in contrast to BeforePosition, this class represents a position that occurs after some specified site. This is represented in GenBank as ‘>13’, and like BeforePosition, you get the boundary number by looking at the position attribute of the object.

position attribute specifies the lower boundary of the range we are looking at, so in our example case this would be one. The extension attribute specifies the range to the higher boundary, so in this case it would be 4. So object.position is the lower boundary and object.position + object.extension is the upper boundary.

Here’s an example where we create a location with fuzzy end points:

>>> from Bio import SeqFeature

>>> start_pos = SeqFeature.AfterPosition(5)

>>> end_pos = SeqFeature.BetweenPosition(9, left=8, right=9)

>>> my_location = SeqFeature.FeatureLocation(start_pos, end_pos)

Note that the details of some of the fuzzy-locations changed in Biopython 1.59, in particular for BetweenPosition and WithinPosition you must now make it explicit which integer position should be used for slicing etc. For a start position this is generally the lower (left) value, while for an end position this would generally be the higher (right) value.

If you print out a FeatureLocation object, you can get a nice representation of the information:

>>> print(my_location)
[>5:(8^9)]

We can access the fuzzy start and end positions using the start and end attributes of the location:

>>> my_location.start
AfterPosition(5)
>>> print(my_location.start)
>5
>>> my_location.end
BetweenPosition(9, left=8, right=9)
>>> print(my_location.end)
(8^9)

If you don’t want to deal with fuzzy positions and just want numbers, they are actually subclasses of integers so should work like integers:

>>> int(my_location.start)
5
>>> int(my_location.end)
9

For compatibility with older versions of Biopython you can ask for the nofuzzy_start and nofuzzy_end attributes of the location, which are plain integers:

>>> my_location.nofuzzy_start
5
>>> my_location.nofuzzy_end
9

Notice that this just gives you back the position attributes of the fuzzy locations.
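Because the fuzzy positions behave like integers, they can also be used directly as slice bounds. A small sketch, rebuilding the fuzzy location from the example above and using a made-up string to stand in for a sequence:

```python
from Bio.SeqFeature import AfterPosition, BetweenPosition, FeatureLocation

# Same fuzzy location as in the example above.
my_location = FeatureLocation(AfterPosition(5), BetweenPosition(9, left=8, right=9))

text = "ABCDEFGHIJKLMNOP"  # made-up stand-in for a sequence

# The fuzzy positions can be used directly as slice bounds...
fuzzy_slice = text[my_location.start:my_location.end]
# ...and should give the same result as the plain integers 5 and 9.
plain_slice = text[5:9]

print(fuzzy_slice, plain_slice)
print(isinstance(my_location.start, int))
```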

Similarly, to make it easy to create a position without worrying about fuzzy positions, you can just pass in numbers to the FeatureLocation constructor, and you’ll get back out ExactPosition objects:

>>> exact_location = SeqFeature.FeatureLocation(5, 9)
>>> print(exact_location)
[5:9]
>>> exact_location.start
ExactPosition(5)
>>> int(exact_location.start)
5
>>> exact_location.nofuzzy_start
5

That is most of the nitty gritty about dealing with fuzzy positions in Biopython. It has been designed so that dealing with fuzziness is not that much more complicated than dealing with exact positions, and hopefully you find that true!

You can use the Python keyword in with a SeqFeature or location object to see if the base/residue for a parent coordinate is within the feature/location or not.

For example, suppose you have a SNP of interest and you want to know which features this SNP is within, and let’s suppose this SNP is at index 4350 (Python counting!). Here is a simple brute force solution where we just check all the features one by one in a loop:

>>> from Bio import SeqIO
>>> my_snp = 4350
>>> record = SeqIO.read("NC_005816.gb", "genbank")
>>> for feature in record.features:
...     if my_snp in feature:
...         print("%s %s" % (feature.type, feature.qualifiers.get('db_xref')))
...
source ['taxon:229193']
gene ['GeneID:2767712']
CDS ['GI:45478716', 'GeneID:2767712']

Note that gene and CDS features from GenBank or EMBL files defined with joins are the union of the exons – they do not cover any introns.
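To see the effect of a join on the in test without needing a GenBank file, here is a minimal sketch using a made-up two-exon feature (exons at 0:10 and 20:30, so the intron is 10:20). The coordinates and feature type are assumptions for illustration:

```python
from Bio.SeqFeature import CompoundLocation, FeatureLocation, SeqFeature

# A made-up two-exon CDS: exons at 0:10 and 20:30, intron at 10:20.
exons = CompoundLocation([FeatureLocation(0, 10, strand=+1),
                          FeatureLocation(20, 30, strand=+1)])
cds = SeqFeature(exons, type="CDS")

print(5 in cds)   # inside the first exon
print(15 in cds)  # inside the intron
print(25 in cds)  # inside the second exon
```

Only the positions inside an exon should be reported as being within the feature.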

A SeqFeature or location object doesn’t directly contain a sequence; instead the location (see Section 4.3.2) describes how to get this from the parent sequence. For example, consider a (short) gene sequence with location 5:18 on the reverse strand, which in GenBank/EMBL notation using 1-based counting would be complement(6..18), like this:

>>> from Bio.Seq import Seq
>>> from Bio.SeqFeature import SeqFeature, FeatureLocation
>>> example_parent = Seq("ACCGAGACGGCAAAGGCTAGCATAGGTATGAGACTTCCTTCCTGCCAGTGCTGAGGAACTGGGAGCCTAC")
>>> example_feature = SeqFeature(FeatureLocation(5, 18), type="gene", strand=-1)

You could take the parent sequence, slice it to extract 5:18, and then take the reverse complement. If you are using Biopython 1.59 or later, the feature location’s start and end are integer-like, so this works:

>>> feature_seq = example_parent[example_feature.location.start:example_feature.location.end].reverse_complement()
>>> print(feature_seq)
AGCCTTTGCCGTC

This is a simple example so this isn’t too bad – however once you have to deal with compound features (joins) this is rather messy. Instead, the SeqFeature object has an extract method to take care of all this:

>>> feature_seq = example_feature.extract(example_parent)
>>> print(feature_seq)
AGCCTTTGCCGTC

The length of a SeqFeature or location matches that of the region of sequence it describes.

>>> print(example_feature.extract(example_parent))
AGCCTTTGCCGTC
>>> print(len(example_feature.extract(example_parent)))
13
>>> print(len(example_feature))
13
>>> print(len(example_feature.location))
13

For simple FeatureLocation objects the length is just the difference between the start and end positions. However, for a CompoundLocation the length is the sum of the constituent regions.
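For the compound case, a short sketch may help. The parent sequence and the two-part join below are made up for illustration; the example assumes a forward-strand join, where the parts are extracted and concatenated in the order given:

```python
from Bio.Seq import Seq
from Bio.SeqFeature import CompoundLocation, FeatureLocation, SeqFeature

# Made-up parent sequence: two "exons" (GGG and CCC) separated by TTT.
parent = Seq("AAGGGTTTCCCAA")
exon_parts = [FeatureLocation(2, 5, strand=+1),
              FeatureLocation(8, 11, strand=+1)]
join_feature = SeqFeature(CompoundLocation(exon_parts), type="CDS")

# extract() slices out each part and joins the pieces together.
spliced = join_feature.extract(parent)
print(spliced)

# The feature length is the sum of the parts: (5 - 2) + (11 - 8).
print(len(join_feature), len(spliced))
```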

Another common annotation related to a sequence is a reference to a journal or other published work dealing with the sequence. We have a fairly simple way of representing a Reference in Biopython – we have a Bio.SeqFeature.Reference class that stores the relevant information about a reference as attributes of an object.

The attributes include things that you would expect to see in a reference like journal, title and authors. Additionally, it also can hold the medline_id and pubmed_id and a comment about the reference. These are all accessed simply as attributes of the object.

A reference also has a location object so that it can specify a particular location on the sequence that the reference refers to. For instance, you might have a journal article that deals with a particular gene located on a BAC, and want to specify that it only refers to this position exactly. The location is a potentially fuzzy location, as described in section 4.3.2.

Any reference objects are stored as a list in the SeqRecord object’s annotations dictionary under the key “references”.
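As a minimal sketch of how this fits together, the example below creates a Reference by hand and attaches it to a record. All of the bibliographic values, the sequence and the record id are placeholders invented for this example, not a real citation:

```python
from Bio.Seq import Seq
from Bio.SeqFeature import FeatureLocation, Reference
from Bio.SeqRecord import SeqRecord

# All bibliographic values below are placeholders, not a real citation.
ref = Reference()
ref.authors = "Author A., Author B."
ref.title = "Placeholder title"
ref.journal = "Placeholder journal"
# The part of the sequence the reference covers (held in a list).
ref.location = [FeatureLocation(0, 12)]

record = SeqRecord(Seq("ACGTACGTACGT"), id="example")
record.annotations["references"] = [ref]

for reference in record.annotations["references"]:
    print(reference.title)
    print(reference.journal)
```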

That’s all there is to it. References are meant to be easy to deal with, and hopefully general enough to cover lots of use cases.

The format() method of the SeqRecord class gives a string containing your record formatted using one of the output file formats supported by Bio.SeqIO, such as FASTA:

from Bio.Seq import Seq
from Bio.SeqRecord import SeqRecord
from Bio.Alphabet import generic_protein

record = SeqRecord(Seq("MMYQQGCFAGGTVLRLAKDLAENNRGARVLVVCSEITAVTFRGPSETHLDSMVGQALFGD" \
                      +"GAGAVIVGSDPDLSVERPLYELVWTGATLLPDSEGAIDGHLREVGLTFHLLKDVPGLISK" \
                      +"NIEKSLKEAFTPLGISDWNSTFWIAHPGGPAILDQVEAKLGLKEEKMRATREVLSEYGNM" \
                      +"SSAC", generic_protein),
                   id="gi|14150838|gb|AAK54648.1|AF376133_1",
                   description="chalcone synthase [Cucumis sativus]")

print(record.format("fasta"))

which should give:

>gi|14150838|gb|AAK54648.1|AF376133_1 chalcone synthase [Cucumis sativus]
MMYQQGCFAGGTVLRLAKDLAENNRGARVLVVCSEITAVTFRGPSETHLDSMVGQALFGD
GAGAVIVGSDPDLSVERPLYELVWTGATLLPDSEGAIDGHLREVGLTFHLLKDVPGLISK
NIEKSLKEAFTPLGISDWNSTFWIAHPGGPAILDQVEAKLGLKEEKMRATREVLSEYGNM
SSAC

This format method takes a single mandatory argument, a lower case string which is supported by Bio.SeqIO as an output format (see Chapter 5). However, some of the file formats Bio.SeqIO can write to require more than one record (typically the case for multiple sequence alignment formats), and thus won’t work via this format() method. See also Section 5.5.4.
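To illustrate how the format name selects the output, here is a small sketch that formats one record in two single-record formats, FASTA and the simple tab-separated "tab" format. The identifier, description and sequence are made up for this example:

```python
from Bio.Seq import Seq
from Bio.SeqRecord import SeqRecord

# Made-up identifier, description and sequence, for illustration only.
short_record = SeqRecord(Seq("MKTAYIAKQR"), id="example_id",
                         description="hypothetical protein")

fasta_text = short_record.format("fasta")  # header line, then the sequence
tab_text = short_record.format("tab")      # identifier, a tab, the sequence

print(fasta_text)
print(tab_text)
```

In both cases the result is an ordinary Python string, so it can be printed, written to a file handle, or inspected like any other text.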

You can slice a SeqRecord, to give you a new SeqRecord covering just part of the sequence. What is important here is that any per-letter annotations are also sliced, and any features which fall completely within the new sequence are preserved (with their locations adjusted).

For example, taking the same GenBank file used earlier:

>>> from Bio import SeqIO
>>> record = SeqIO.read("NC_005816.gb", "genbank")
>>> record
SeqRecord(seq=Seq('TGTAACGAACGGTGCAATAGTGATCCACACCCAACGCCTGAAATCAGATCCAGG...CTG',
IUPACAmbiguousDNA()), id='NC_005816.1', name='NC_005816',
description='Yersinia pestis biovar Microtus str. 91001 plasmid pPCP1, complete sequence.',
dbxrefs=['Project:10638'])
>>> len(record)
9609
>>> len(record.features)
41

For this example we’re going to focus in on the pim gene, YP_pPCP05. If you have a look at the GenBank file directly you’ll find this gene/CDS has location string 4343..4780, or in Python counting 4342:4780. From looking at the file you can work out that these are the twenty-first and twenty-second entries in the file, so in Python zero-based counting they are entries 20 and 21 in the features list:

>>> print(record.features[20])
type: gene
location: [4342:4780](+)
qualifiers:
    Key: db_xref, Value: ['GeneID:2767712']
    Key: gene, Value: ['pim']
    Key: locus_tag, Value: ['YP_pPCP05']
<BLANKLINE>
>>> print(record.features[21])
type: CDS
location: [4342:4780](+)
qualifiers:
    Key: codon_start, Value: ['1']
    Key: db_xref, Value: ['GI:45478716', 'GeneID:2767712']
    Key: gene, Value: ['pim']
    Key: locus_tag, Value: ['YP_pPCP05']
    Key: note, Value: ['similar to many previously sequenced pesticin immunity ...']
    Key: product, Value: ['pesticin immunity protein']
    Key: protein_id, Value: ['NP_995571.1']
    Key: transl_table, Value: ['11']
    Key: translation, Value: ['MGGGMISKLFCLALIFLSSSGLAEKNTYTAKDILQNLELNTFGNSLSH...']

Let’s slice this parent record from 4300 to 4800 (enough to include the pim gene/CDS), and see how many features we get:

>>> sub_record = record[4300:4800]
>>> sub_record
SeqRecord(seq=Seq('ATAAATAGATTATTCCAAATAATTTATTTATGTAAGAACAGGATGGGAGGGGGA...TTA',
IUPACAmbiguousDNA()), id='NC_005816.1', name='NC_005816',
description='Yersinia pestis biovar Microtus str. 91001 plasmid pPCP1, complete sequence.',
dbxrefs=[])
>>> len(sub_record)
500
>>> len(sub_record.features)
2

Our sub-record just has two features, the gene and CDS entries for YP_pPCP05:

>>> print(sub_record.features[0])
type: gene
location: [42:480](+)
qualifiers:
    Key: db_xref, Value: ['GeneID:2767712']
    Key: gene, Value: ['pim']
    Key: locus_tag, Value: ['YP_pPCP05']
<BLANKLINE>
>>> print(sub_record.features[1])
type: CDS
location: [42:480](+)
qualifiers:
    Key: codon_start, Value: ['1']
    Key: db_xref, Value: ['GI:45478716', 'GeneID:2767712']
    Key: gene, Value: ['pim']
    Key: locus_tag, Value: ['YP_pPCP05']
    Key: note, Value: ['similar to many previously sequenced pesticin immunity ...']
    Key: product, Value: ['pesticin immunity protein']
    Key: protein_id, Value: ['NP_995571.1']
    Key: transl_table, Value: ['11']
    Key: translation, Value: ['MGGGMISKLFCLALIFLSSSGLAEKNTYTAKDILQNLELNTFGNSLSH...']

Notice that their locations have been adjusted to reflect the new parent sequence!

While Biopython has done something sensible and hopefully intuitive with the features (and any per-letter annotation), for the other annotation it is impossible to know if this still applies to the sub-sequence or not. To avoid guessing, the annotations and dbxrefs are omitted from the sub-record, and it is up to you to transfer any relevant information as appropriate.

>>> sub_record.annotations
{}
>>> sub_record.dbxrefs
[]
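The same behaviour can be tried without the GenBank file. The sketch below uses a made-up 20 base record; the sequence, feature, quality scores and annotation are all invented for illustration:

```python
from Bio.Seq import Seq
from Bio.SeqFeature import FeatureLocation, SeqFeature
from Bio.SeqRecord import SeqRecord

# A made-up record with one feature, per-letter scores and an annotation.
parent = SeqRecord(Seq("ACGTACGTACGTACGTACGT"), id="demo")
parent.features.append(SeqFeature(FeatureLocation(6, 12), type="gene"))
parent.letter_annotations["phred_quality"] = list(range(20))
parent.annotations["source"] = "made-up example"

child = parent[4:16]

# The feature lies inside 4:16, so it is kept with its location shifted by 4.
print(child.features[0].location)
# Per-letter annotation is sliced along with the sequence.
print(child.letter_annotations["phred_quality"])
# The free-form annotation is not carried over to the sub-record.
print("source" in child.annotations)
```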

The same point could be made about the record id, name and description, but for practicality these are preserved:

>>> sub_record.id
'NC_005816.1'
>>> sub_record.name
'NC_005816'
>>> sub_record.description
'Yersinia pestis biovar Microtus str. 91001 plasmid pPCP1, complete sequence.'

This illustrates the problem nicely though: our new sub-record is not the complete sequence of the plasmid, so the description is wrong! Let’s fix this and then view the sub-record as a reduced GenBank file using the format method described above in Section 4.5:

>>> sub_record.description = "Yersinia pestis biovar Microtus str. 91001 plasmid pPCP1, partial."
>>> print(sub_record.format("genbank"))
...

See Sections 18.1.7 and 18.1.8 for some FASTQ examples where the per-letter annotations (the read quality scores) are also sliced.

You can add SeqRecord objects together, giving a new SeqRecord. What is important here is that any common per-letter annotations are also added, all the features are preserved (with their locations adjusted), and any other common annotation is also kept (like the id, name and description).
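As a quick sketch of these rules, the example below adds two small records built by hand. The sequences, quality scores, features and the shared id are all made up for illustration:

```python
from Bio.Seq import Seq
from Bio.SeqFeature import FeatureLocation, SeqFeature
from Bio.SeqRecord import SeqRecord

# Two made-up records sharing an id and a per-letter annotation key.
left = SeqRecord(Seq("ACGTAC"), id="demo")
left.letter_annotations["phred_quality"] = [30, 31, 32, 33, 34, 35]
left.features.append(SeqFeature(FeatureLocation(0, 3), type="misc_feature"))

right = SeqRecord(Seq("GGTT"), id="demo")
right.letter_annotations["phred_quality"] = [20, 21, 22, 23]
right.features.append(SeqFeature(FeatureLocation(1, 4), type="misc_feature"))

combined = left + right

print(combined.seq)
print(combined.letter_annotations["phred_quality"])
# Features from the right-hand record are shifted by len(left).
print([str(feature.location) for feature in combined.features])
print(combined.id)
```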

For an example with per-letter annotation, we’ll use the first record in a FASTQ file. Chapter 5 will explain the SeqIO functions:

>>> from Bio import SeqIO
>>> record = next(SeqIO.parse("example.fastq", "fastq"))
>>> len(record)
25